Analysis Results

3ML stores the results of a fit in a container we call an “Analysis Result” (AR). The object is designed to be usable live within an active analysis (Python script, IPython interactive shell, Jupyter notebook) and to be stored as a FITS file so that results can be reloaded later.

The structure is nearly the same for MLE and Bayesian analyses, so the same functionality is available regardless of how the fit was performed.

[ ]:

from threeML import *

from threeML.analysis_results import *

from threeML.io.progress_bar import progress_bar

from jupyterthemes import jtplot

%matplotlib inline

jtplot.style(context="talk", fscale=1, ticks=True, grid=False)

import matplotlib.pyplot as plt

plt.style.use("mike")

import astropy.units as u

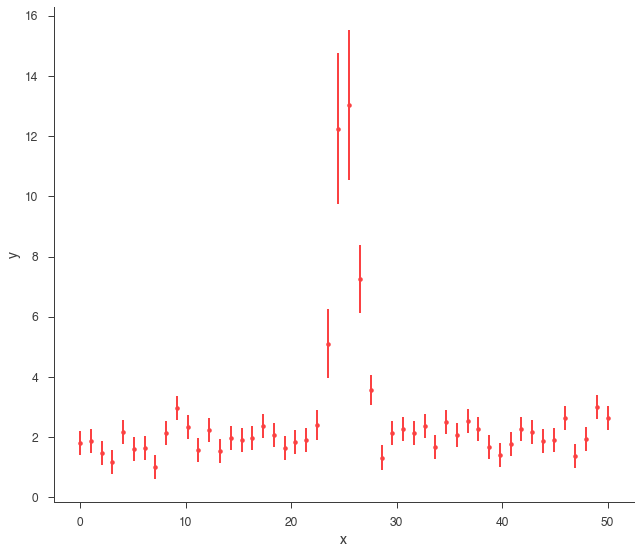

Let’s take a look at what we can do with an AR. First, we will simulate some data.

[2]:

gen_function = Line(a=0.0, b=2) + Gaussian(F=30.0, mu=25.0, sigma=1)

# Generate a dataset using the line and a gaussian.

# constant 20% error

x = np.linspace(0, 50, 50)

xy = XYLike.from_function(

"sim_data", function=gen_function, x=x, yerr=0.2 * gen_function(x)

)

xy.plot()

Using Gaussian statistic (equivalent to chi^2) with the provided errors.
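The message above says the Gaussian statistic is equivalent to chi^2. The sketch below illustrates why, using plain NumPy rather than 3ML: with fixed errors, the full Gaussian -log(likelihood) and chi^2 / 2 differ only by a term that does not depend on the model, so differences between two models agree exactly. The helper names and the two trial models are made up for illustration, and which constant terms 3ML keeps in its reported value is not addressed here.

```python
import numpy as np


def gaussian_neg_log_like(y, model, sigma):
    """Full Gaussian -log(likelihood), including the normalisation term."""
    resid = (y - model) / sigma
    return 0.5 * np.sum(resid**2) + np.sum(np.log(sigma * np.sqrt(2.0 * np.pi)))


def chi2(y, model, sigma):
    """Classic chi^2 with known errors."""
    return np.sum(((y - model) / sigma) ** 2)


rng = np.random.default_rng(0)
x = np.linspace(0, 50, 50)
truth = np.full_like(x, 2.0)           # flat continuum, 20% errors
sigma = 0.2 * truth
y = truth + sigma * rng.standard_normal(x.size)

model_a = np.full_like(x, 2.0)         # hypothetical trial model 1
model_b = 1.8 + 0.01 * x               # hypothetical trial model 2

delta_nll = gaussian_neg_log_like(y, model_b, sigma) - gaussian_neg_log_like(y, model_a, sigma)
delta_chi2 = chi2(y, model_b, sigma) - chi2(y, model_a, sigma)

print(delta_nll, 0.5 * delta_chi2)     # the two differences coincide
```

Because the normalisation term cancels in the difference, minimising either quantity selects the same best-fit parameters.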

MLE Results

First we will demonstrate how AR’s work for an MLE analysis on our synthetic data. As we will see, most of the functionality exists in the Bayesian AR’s as well.

Let’s do a simple likelihood maximization of our data and model.

[3]:

fitfun = Line() + Gaussian()

fitfun.a_1.bounds = (-10, 10.0)

fitfun.b_1.bounds = (-100, 100.0)

fitfun.F_2 = 25.0

fitfun.F_2.bounds = (1e-3, 200.0)

fitfun.mu_2 = 25.0

fitfun.mu_2.bounds = (0.0, 100.0)

fitfun.sigma_2.bounds = (1e-3, 10.0)

model = Model(PointSource("fake", 0.0, 0.0, fitfun))

data = DataList(xy)

jl = JointLikelihood(model, DataList(xy))

_ = jl.fit()

Best fit values:

| parameter | result | unit |
|---|---|---|
| fake.spectrum.main.composite.a_1 | (9 +/- 4) x 10^-3 | 1 / (cm2 keV2 s) |
| fake.spectrum.main.composite.b_1 | 1.76 +/- 0.11 | 1 / (cm2 keV s) |
| fake.spectrum.main.composite.F_2 | (3.2 +/- 0.4) x 10 | 1 / (cm2 s) |
| fake.spectrum.main.composite.mu_2 | (2.521 +/- 0.012) x 10 | keV |
| fake.spectrum.main.composite.sigma_2 | 1.09 +/- 0.09 | keV |

Correlation matrix:

| 1.00 | -0.85 | 0.00 | -0.02 | -0.00 |
| -0.85 | 1.00 | -0.05 | 0.02 | -0.08 |
| 0.00 | -0.05 | 1.00 | -0.24 | -0.15 |
| -0.02 | 0.02 | -0.24 | 1.00 | -0.13 |
| -0.00 | -0.08 | -0.15 | -0.13 | 1.00 |

Values of -log(likelihood) at the minimum:

| | -log(likelihood) |
|---|---|
| sim_data | 24.81213 |
| total | 24.81213 |

Values of statistical measures:

| | statistical measures |
|---|---|
| AIC | 60.987896 |
| BIC | 69.184375 |
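As a cross-check, the statistical measures can be recomputed by hand from the total -log(likelihood) in the table above, the 5 free parameters and the 50 data points. The reported numbers are consistent with the textbook AIC including the small-sample correction and the textbook BIC; that 3ML uses exactly these formulas is an inference from the numbers, not something stated in this page.

```python
import math

neg_log_like = 24.81213  # total -log(likelihood) from the table above
n_free = 5               # free parameters in the fit
n_points = 50            # number of data points in x

# Akaike information criterion and its small-sample correction
aic = 2 * n_free + 2 * neg_log_like
aicc = aic + 2 * n_free * (n_free + 1) / (n_points - n_free - 1)

# Bayesian information criterion
bic = n_free * math.log(n_points) + 2 * neg_log_like

print(f"AIC (corrected): {aicc:.6f}")
print(f"BIC:             {bic:.6f}")
```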

We can get our errors as always, but these errors cannot be propagated (error propagation assumes Gaussian, i.e., symmetric, errors). In this case, though, the errors are nearly symmetric, so the MLE is likely close to normally distributed.

[4]:

jl.get_errors();

| parameter | result | unit |
|---|---|---|
| fake.spectrum.main.composite.a_1 | (9 +/- 4) x 10^-3 | 1 / (cm2 keV2 s) |
| fake.spectrum.main.composite.b_1 | 1.76 +/- 0.11 | 1 / (cm2 keV s) |
| fake.spectrum.main.composite.F_2 | (3.2 +/- 0.4) x 10 | 1 / (cm2 s) |
| fake.spectrum.main.composite.mu_2 | (2.521 -0.011 +0.012) x 10 | keV |
| fake.spectrum.main.composite.sigma_2 | 1.09 +/- 0.09 | keV |

We need to get the AnalysisResults object that is created after a fit is performed. The AR object is a member of the JointLikelihood object.

[5]:

ar = jl.results

We can display the results of the analysis. Note that when a fit is performed, the summary printed afterwards actually comes from the internal AR.

[6]:

ar.display()

Best fit values:

| parameter | result | unit |
|---|---|---|
| fake.spectrum.main.composite.a_1 | (9 +/- 4) x 10^-3 | 1 / (cm2 keV2 s) |
| fake.spectrum.main.composite.b_1 | 1.76 +/- 0.11 | 1 / (cm2 keV s) |
| fake.spectrum.main.composite.F_2 | (3.2 +/- 0.4) x 10 | 1 / (cm2 s) |
| fake.spectrum.main.composite.mu_2 | (2.521 +/- 0.012) x 10 | keV |
| fake.spectrum.main.composite.sigma_2 | 1.09 +/- 0.09 | keV |

Correlation matrix:

| 1.00 | -0.85 | 0.00 | -0.02 | -0.00 |
| -0.85 | 1.00 | -0.05 | 0.02 | -0.08 |
| 0.00 | -0.05 | 1.00 | -0.24 | -0.15 |
| -0.02 | 0.02 | -0.24 | 1.00 | -0.13 |
| -0.00 | -0.08 | -0.15 | -0.13 | 1.00 |

Values of -log(likelihood) at the minimum:

| | -log(likelihood) |
|---|---|
| sim_data | 24.81213 |
| total | 24.81213 |

Values of statistical measures:

| | statistical measures |
|---|---|
| AIC | 60.987896 |
| BIC | 69.184375 |

By default, the equal-tail intervals are displayed. We can instead display highest posterior density (HPD) intervals (the two coincide in the MLE case).

[7]:

ar.display("hpd")

Best fit values:

| parameter | result | unit |
|---|---|---|
| fake.spectrum.main.composite.a_1 | (9 +/- 4) x 10^-3 | 1 / (cm2 keV2 s) |
| fake.spectrum.main.composite.b_1 | 1.76 +/- 0.11 | 1 / (cm2 keV s) |
| fake.spectrum.main.composite.F_2 | (3.2 +/- 0.4) x 10 | 1 / (cm2 s) |
| fake.spectrum.main.composite.mu_2 | (2.521 +/- 0.012) x 10 | keV |
| fake.spectrum.main.composite.sigma_2 | 1.09 +/- 0.09 | keV |

Correlation matrix:

| 1.00 | -0.85 | 0.00 | -0.02 | -0.00 |
| -0.85 | 1.00 | -0.05 | 0.02 | -0.08 |
| 0.00 | -0.05 | 1.00 | -0.24 | -0.15 |
| -0.02 | 0.02 | -0.24 | 1.00 | -0.13 |
| -0.00 | -0.08 | -0.15 | -0.13 | 1.00 |

Values of -log(likelihood) at the minimum:

| | -log(likelihood) |
|---|---|
| sim_data | 24.81213 |
| total | 24.81213 |

Values of statistical measures:

| | statistical measures |
|---|---|
| AIC | 60.987896 |
| BIC | 69.184375 |

The AR stores several properties from the analysis:

[8]:

ar.analysis_type

[8]:

'MLE'

[9]:

ar.covariance_matrix

[9]:

array([[ 1.47801527e-05, -3.69124668e-04, 2.79048679e-05,

-1.05067384e-05, -1.38912356e-06],

[-3.69124668e-04, 1.28732318e-02, -2.17478045e-02,

3.18981090e-04, -7.90107253e-04],

[ 2.79048679e-05, -2.17478045e-02, 1.55285025e+01,

-1.09924522e-01, -5.24718986e-02],

[-1.05067384e-05, 3.18981090e-04, -1.09924522e-01,

1.34279848e-02, -1.35822852e-03],

[-1.38912356e-06, -7.90107253e-04, -5.24718986e-02,

-1.35822852e-03, 7.61121218e-03]])
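The correlation matrix shown by display() can be recovered from this covariance matrix by dividing each entry by the product of the two corresponding standard deviations. The sketch below does this with plain NumPy on the values printed above; it is an illustration of the relationship, not a description of how 3ML computes the matrix internally.

```python
import numpy as np

# Covariance matrix copied from the output above
# (parameter order: a_1, b_1, F_2, mu_2, sigma_2)
cov = np.array([
    [ 1.47801527e-05, -3.69124668e-04,  2.79048679e-05, -1.05067384e-05, -1.38912356e-06],
    [-3.69124668e-04,  1.28732318e-02, -2.17478045e-02,  3.18981090e-04, -7.90107253e-04],
    [ 2.79048679e-05, -2.17478045e-02,  1.55285025e+01, -1.09924522e-01, -5.24718986e-02],
    [-1.05067384e-05,  3.18981090e-04, -1.09924522e-01,  1.34279848e-02, -1.35822852e-03],
    [-1.38912356e-06, -7.90107253e-04, -5.24718986e-02, -1.35822852e-03,  7.61121218e-03],
])

# Standard deviations are the square roots of the diagonal
sigma = np.sqrt(np.diag(cov))

# corr_ij = cov_ij / (sigma_i * sigma_j)
corr = cov / np.outer(sigma, sigma)

print(np.round(sigma, 4))
print(np.round(corr, 2))
```

The square roots of the diagonal are also close to the symmetric errors quoted in the best-fit table.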

[10]:

ar.get_point_source_flux(1*u.keV, .1*u.MeV)

WARNING UserWarning: get_point_source_flux() has been replaced by get_flux()

| | flux |
|---|---|
| fake: total | (2.00 -0.21 +0.22) x 10^-5 erg / (cm2 s) |

[10]:

| | flux | low bound | hi bound |
|---|---|---|---|
| fake: total | 2.0042122454660008e-05 erg / (cm2 s) | 1.7945578355340123e-05 erg / (cm2 s) | 2.2218485618241006e-05 erg / (cm2 s) |

[11]:

ar.optimized_model

[11]:

| | N |
|---|---|
| Point sources | 1 |
| Extended sources | 0 |
| Particle sources | 0 |

Free parameters (5):

| | value | min_value | max_value | unit |
|---|---|---|---|---|
| fake.spectrum.main.composite.a_1 | 0.00877149 | -10 | 10 | s-1 cm-2 keV-2 |
| fake.spectrum.main.composite.b_1 | 1.76234 | -100 | 100 | keV-1 s-1 cm-2 |
| fake.spectrum.main.composite.F_2 | 32.0749 | 0.001 | 200 | s-1 cm-2 |
| fake.spectrum.main.composite.mu_2 | 25.2136 | 0 | 100 | keV |
| fake.spectrum.main.composite.sigma_2 | 1.09261 | 0.001 | 10 | keV |

Fixed parameters (2):

(abridged. Use complete=True to see all fixed parameters)

Linked parameters (0):

(none)

Independent variables:

(none)

Saving results to disk

The beauty of the analysis result is that all of this information can be written to disk and restored at a later time. The statistical parameters, best-fit model, etc. can all be recovered.

AR’s are stored as a structured FITS file. We write the AR like this:

[12]:

ar.write_to("test_mle.fits", overwrite=True)

WARNING: VerifyWarning: Card is too long, comment will be truncated. [astropy.io.fits.card]

WARNING:astropy:VerifyWarning: Card is too long, comment will be truncated.

The FITS file can be examined with any normal FITS reader.

[13]:

import astropy.io.fits as fits

[14]:

ar_fits = fits.open('test_mle.fits')

ar_fits.info()

Filename: test_mle.fits

No. Name Ver Type Cards Dimensions Format

0 PRIMARY 1 PrimaryHDU 6 ()

1 ANALYSIS_RESULTS 1 BinTableHDU 36 5R x 8C [36A, D, D, D, D, 16A, 5D, D]

However, to easily pull the results back into the 3ML framework, we use the `load_analysis_results` function:

[15]:

ar_reloaded = load_analysis_results("test_mle.fits")

WARNING YAMLLoadWarning: calling yaml.load() without Loader=... is deprecated, as the default Loader is unsafe. Please read https://msg.pyyaml.org/load for full details.

[16]:

ar_reloaded.get_statistic_frame()

[16]:

| | -log(likelihood) |
|---|---|
| sim_data | 24.81213 |
| total | 24.81213 |

You can get a DataFrame with the saved results:

[17]:

ar_reloaded.get_data_frame()

[17]:

| | value | negative_error | positive_error | error | unit |
|---|---|---|---|---|---|
| fake.spectrum.main.composite.a_1 | 0.008771 | -0.003853 | 0.003748 | 0.003801 | 1 / (cm2 keV2 s) |
| fake.spectrum.main.composite.b_1 | 1.762337 | -0.113744 | 0.113748 | 0.113746 | 1 / (cm2 keV s) |
| fake.spectrum.main.composite.F_2 | 32.074949 | -3.894620 | 3.847490 | 3.871055 | 1 / (cm2 s) |
| fake.spectrum.main.composite.mu_2 | 25.213581 | -0.112303 | 0.113055 | 0.112679 | keV |
| fake.spectrum.main.composite.sigma_2 | 1.092612 | -0.087786 | 0.084940 | 0.086363 | keV |
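The columns of this table are related in a simple way: in every row, the error column matches the mean of the absolute negative and positive errors. The short check below verifies this on the numbers printed above; it is an observation about this output, not a statement about how 3ML defines the column.

```python
# (negative_error, positive_error, error) copied from the table above
rows = {
    "a_1": (-0.003853, 0.003748, 0.003801),
    "b_1": (-0.113744, 0.113748, 0.113746),
    "F_2": (-3.894620, 3.847490, 3.871055),
    "mu_2": (-0.112303, 0.113055, 0.112679),
    "sigma_2": (-0.087786, 0.084940, 0.086363),
}

# Average the magnitudes of the two asymmetric errors
symmetric = {
    name: 0.5 * (abs(neg) + pos) for name, (neg, pos, _) in rows.items()
}

for name, (_, _, reported) in rows.items():
    print(f"{name:8s} averaged: {symmetric[name]:.6f}   reported: {reported:.6f}")
```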

Analysis Result Sets

When doing time-resolved analysis or analysing several objects, we can save several AR’s in a set. This is achieved with the analysis result set. We can pass a list of AR’s to the set and even set up descriptions for the different entries.

[18]:

from threeML.analysis_results import AnalysisResultsSet

analysis_set = AnalysisResultsSet([ar, ar_reloaded])

# index as time bins

analysis_set.set_bins("testing", [-1, 1], [3, 5], unit="s")

# write to disk

analysis_set.write_to("analysis_set_test.fits", overwrite=True)

WARNING: VerifyWarning: Card is too long, comment will be truncated. [astropy.io.fits.card]

WARNING:astropy:VerifyWarning: Card is too long, comment will be truncated.

[19]:

analysis_set = load_analysis_results("analysis_set_test.fits")

WARNING YAMLLoadWarning: calling yaml.load() without Loader=... is deprecated, as the default Loader is unsafe. Please read https://msg.pyyaml.org/load for full details.

WARNING YAMLLoadWarning: calling yaml.load() without Loader=... is deprecated, as the default Loader is unsafe. Please read https://msg.pyyaml.org/load for full details.

[20]:

analysis_set[0].display()

Best fit values:

| parameter | result | unit |
|---|---|---|
| fake.spectrum.main.composite.a_1 | (9 +/- 4) x 10^-3 | 1 / (cm2 keV2 s) |
| fake.spectrum.main.composite.b_1 | 1.76 +/- 0.11 | 1 / (cm2 keV s) |
| fake.spectrum.main.composite.F_2 | (3.2 +/- 0.4) x 10 | 1 / (cm2 s) |
| fake.spectrum.main.composite.mu_2 | (2.521 +/- 0.012) x 10 | keV |
| fake.spectrum.main.composite.sigma_2 | 1.09 +/- 0.09 | keV |

Correlation matrix:

| 1.00 | -0.85 | 0.00 | -0.02 | -0.00 |
| -0.85 | 1.00 | -0.05 | 0.02 | -0.08 |
| 0.00 | -0.05 | 1.00 | -0.24 | -0.15 |
| -0.02 | 0.02 | -0.24 | 1.00 | -0.13 |
| -0.00 | -0.08 | -0.15 | -0.13 | 1.00 |

Values of -log(likelihood) at the minimum:

| | -log(likelihood) |
|---|---|
| sim_data | 24.81213 |
| total | 24.81213 |

Values of statistical measures:

| | statistical measures |
|---|---|
| AIC | 60.987896 |
| BIC | 69.184375 |

Error propagation

In 3ML, we propagate errors for MLE results by sampling the covariance matrix instead of Taylor expanding around the maximum of the likelihood and computing a Jacobian. Thus, we can achieve non-linear error propagation.
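The idea can be sketched outside 3ML with plain NumPy: draw parameter samples from a multivariate normal centred on the best fit, apply the function of interest to every sample, and summarise the resulting distribution. The best-fit values and covariance entries for a_1 and b_1 below are copied from the outputs above; the sample size, the seed and the 16th/84th percentiles (a 68% interval) are choices made for this illustration and are not taken from 3ML.

```python
import numpy as np

rng = np.random.default_rng(42)

# Best-fit values and 2x2 covariance block for (a_1, b_1), from the fit above
best_fit = np.array([0.00877149, 1.76234])
cov = np.array([
    [ 1.47801527e-05, -3.69124668e-04],
    [-3.69124668e-04,  1.28732318e-02],
])

# Draw correlated samples of the two parameters
samples = rng.multivariate_normal(best_fit, cov, size=100_000)
a, b = samples[:, 0], samples[:, 1]

# Propagate: any function of the samples gives samples of the result
total = a + b
low, median, high = np.percentile(total, [16, 50, 84])

# For a linear function, the sampled spread must agree with the
# usual linear formula that includes the covariance term
linear_sigma = np.sqrt(cov[0, 0] + cov[1, 1] + 2 * cov[0, 1])

print(f"sampled: {median:.3f} -{median - low:.3f} +{high - median:.3f}")
print(f"linear sigma: {linear_sigma:.3f}")
```

For a sum the two approaches agree; the sampling approach keeps working when the function is non-linear and the linear formula no longer applies.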

You can use the results for propagating errors non-linearly for analytical functions:

[21]:

p1 = ar.get_variates("fake.spectrum.main.composite.a_1")

p2 = ar.get_variates("fake.spectrum.main.composite.b_1")

print("Propagating a+b, with a and b respectively:")

print(p1)

print(p2)

print("\nThis is the result (with errors):")

res = p1 + p2

print(res)

print(res.equal_tail_interval())

Propagating a+b, with a and b respectively:

equal-tail: (9 +/- 4) x 10^-3, hpd: (9 +/- 4) x 10^-3

equal-tail: 1.76 +/- 0.11, hpd: 1.76 -0.12 +0.11

This is the result (with errors):

equal-tail: 1.77 +/- 0.11, hpd: 1.77 -0.12 +0.10

(1.6607512376061622, 1.8806969631644799)

The propagation accounts for covariances. For example, this has an error of zero (of course), since a is perfectly correlated with itself.

[22]:

print("\nThis is 50 * a/a:")

print(50 * p1/p1)

This is 50 * a/a:

equal-tail: (5.0 +/- 0) x 10, hpd: (5.0 +/- 0) x 10

WARNING RuntimeWarning: divide by zero encountered in log10

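The zero error follows directly from working with samples: dividing an array of samples by itself gives the same value for every sample, whereas dividing by an independent set of samples with the same distribution does not. A minimal NumPy sketch, using a normal distribution with roughly the mean and width of a_1 as a stand-in for the real variates:

```python
import numpy as np

rng = np.random.default_rng(1)

# Stand-in samples for parameter a (mean and width roughly as in the fit)
a = rng.normal(0.00877, 0.0038, size=5000)

# Same samples in numerator and denominator: perfectly correlated
same = 50 * a / a

# Independent samples with the same distribution: no correlation
a_other = rng.normal(0.00877, 0.0038, size=5000)
independent = 50 * a / a_other

print("spread with shared samples:     ", np.std(same))
print("spread with independent samples:", np.std(independent))
```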

You can use arbitrary (np) functions:

[23]:

print("\nThis is arcsinh(a + 5*b) / np.log10(b) (why not?)")

print(np.arcsinh(p1 + 5 * p2) / np.log10(p2))

This is arcsinh(a + 5*b) / np.log10(b) (why not?)

equal-tail: (1.17 -0.09 +0.12) x 10, hpd: (1.17 -0.11 +0.10) x 10

Errors can become asymmetric. For example, the ratio of two Gaussian variables is notoriously asymmetric:

[24]:

print("\nRatio b/a:")

print(p2 / p1)

Ratio b/a:

equal-tail: (2.0 -0.7 +1.6) x 10^2, hpd: (2.0 -1.0 +0.7) x 10^2
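The two intervals in the output differ because they are defined differently. An equal-tail interval cuts the same probability from each side of the distribution, while a highest-density interval is the shortest interval containing the requested probability. The sketch below implements both on samples with NumPy; the function names, the 68% level and the sample-based HPD estimate are assumptions of this illustration, not the 3ML implementation.

```python
import numpy as np


def equal_tail_interval(samples, cl=0.68):
    """Interval leaving (1 - cl) / 2 of the samples in each tail."""
    tail = 100.0 * (1.0 - cl) / 2.0
    low, high = np.percentile(samples, [tail, 100.0 - tail])
    return low, high


def hpd_interval(samples, cl=0.68):
    """Shortest interval containing a fraction cl of the samples."""
    s = np.sort(samples)
    n_in = int(np.ceil(cl * s.size))
    # Width of every window holding n_in consecutive sorted samples
    widths = s[n_in - 1:] - s[: s.size - n_in + 1]
    start = int(np.argmin(widths))
    return s[start], s[start + n_in - 1]


rng = np.random.default_rng(3)
best_fit = np.array([0.00877149, 1.76234])             # a_1, b_1 from the fit
cov = np.array([[1.47801527e-05, -3.69124668e-04],
                [-3.69124668e-04, 1.28732318e-02]])
a, b = rng.multivariate_normal(best_fit, cov, size=50_000).T

ratio = b / a
print("median:    ", np.median(ratio))
print("equal-tail:", equal_tail_interval(ratio))
print("hpd:       ", hpd_interval(ratio))
```

For a skewed distribution such as this ratio, the highest-density interval is shorter and sits closer to the peak than the equal-tail interval.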

You can always use it with arbitrary functions:

[25]:

def my_function(x, a, b):

    return b * x ** a

print("\nPropagating using a custom function:")

print(my_function(2.3, p1, p2))

Propagating using a custom function:

equal-tail: 1.77 +/- 0.11, hpd: 1.77 +/- 0.11

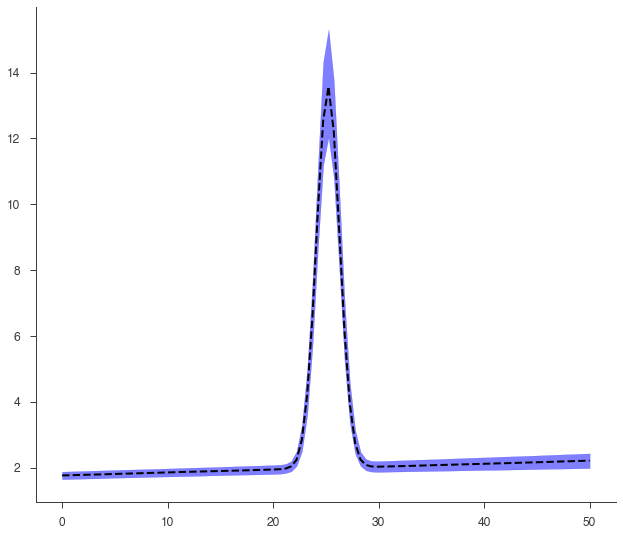

This is an example of error propagation used to plot the model with its errors (which are propagated without assuming linearity in the parameters):

[26]:

def go(fitfun, ar, model):

    fig, ax = plt.subplots()

    # Gather the parameter variates
    arguments = {}

    # Indices shared by all parameters, so that thinning the samples
    # keeps the correlations between parameters intact
    subsample = None

    for par in fitfun.parameters.values():

        if par.free:

            this_name = par.name
            this_variate = ar.get_variates(par.path)

            # Do not use more than 1000 values (would make computation too slow for nothing)
            if len(this_variate) > 1000:

                if subsample is None:
                    subsample = np.random.choice(len(this_variate), size=1000)

                this_variate = np.asarray(this_variate)[subsample]

            arguments[this_name] = this_variate

    # Prepare the error propagator function
    pp = ar.propagate(
        ar.optimized_model.fake.spectrum.main.shape.evaluate_at, **arguments
    )

    # You can just use it as:
    print(pp(5.0))

    # Make the plot
    energies = np.linspace(0, 50, 100)
    low_curve = np.zeros_like(energies)
    middle_curve = np.zeros_like(energies)
    hi_curve = np.zeros_like(energies)

    free_parameters = model.free_parameters

    with progress_bar(len(energies), title="Propagating errors") as p:

        with use_astromodels_memoization(False):

            for i, e in enumerate(energies):

                this_flux = pp(e)
                low_bound, hi_bound = this_flux.equal_tail_interval()

                low_curve[i], middle_curve[i], hi_curve[i] = (
                    low_bound,
                    this_flux.median,
                    hi_bound,
                )

                p.increase()

    ax.plot(energies, middle_curve, "--", color="black")
    ax.fill_between(energies, low_curve, hi_curve, alpha=0.5, color="blue")

[27]:

go(fitfun, ar, model)

equal-tail: 1.81 -0.11 +0.12, hpd: 1.81 -0.09 +0.14
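The same band can be sketched without 3ML by sampling all five parameters jointly and evaluating the model on a grid. The best-fit values and covariance matrix are copied from the outputs above. The model form used here, a * x + b plus a normalised Gaussian of total area F, is an assumption inferred from the parameter units and from the value printed at x = 5; the function name, seed and 68% percentiles are likewise choices made for this illustration.

```python
import numpy as np

# Best fit and covariance (order: a_1, b_1, F_2, mu_2, sigma_2)
best_fit = np.array([0.00877149, 1.76234, 32.0749, 25.2136, 1.09261])
cov = np.array([
    [ 1.47801527e-05, -3.69124668e-04,  2.79048679e-05, -1.05067384e-05, -1.38912356e-06],
    [-3.69124668e-04,  1.28732318e-02, -2.17478045e-02,  3.18981090e-04, -7.90107253e-04],
    [ 2.79048679e-05, -2.17478045e-02,  1.55285025e+01, -1.09924522e-01, -5.24718986e-02],
    [-1.05067384e-05,  3.18981090e-04, -1.09924522e-01,  1.34279848e-02, -1.35822852e-03],
    [-1.38912356e-06, -7.90107253e-04, -5.24718986e-02, -1.35822852e-03,  7.61121218e-03],
])


def line_plus_gaussian(x, a, b, F, mu, sigma):
    """Assumed model: straight line plus a Gaussian of total area F."""
    gauss = F / (sigma * np.sqrt(2.0 * np.pi)) * np.exp(-0.5 * ((x - mu) / sigma) ** 2)
    return a * x + b + gauss


rng = np.random.default_rng(7)

# One joint draw per row keeps the parameter correlations intact
draws = rng.multivariate_normal(best_fit, cov, size=1000)

energies = np.linspace(0, 50, 100)

# Broadcast: (1000 draws, 1) against (1, 100 energies) -> (1000, 100) curves
columns = [draws[:, i, None] for i in range(5)]
curves = line_plus_gaussian(energies[None, :], *columns)

# Pointwise 68% band and median curve
low_curve, middle_curve, hi_curve = np.percentile(curves, [16, 50, 84], axis=0)

value_at_5 = np.median(line_plus_gaussian(5.0, *draws.T))
print(value_at_5)
```

The three curves can then be drawn with ax.plot and ax.fill_between exactly as in the function above.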

Bayesian Analysis Results

Analysis Results work exactly the same under Bayesian analysis.

Let’s run the analysis first.

[28]:

for parameter in ar.optimized_model:

    model[parameter.path].value = parameter.value

model.fake.spectrum.main.composite.a_1.set_uninformative_prior(Uniform_prior)

model.fake.spectrum.main.composite.b_1.set_uninformative_prior(Uniform_prior)

model.fake.spectrum.main.composite.F_2.set_uninformative_prior(Log_uniform_prior)

model.fake.spectrum.main.composite.mu_2.set_uninformative_prior(Uniform_prior)

model.fake.spectrum.main.composite.sigma_2.set_uninformative_prior(Log_uniform_prior)

bs = BayesianAnalysis(model, data)

# In recent 3ML releases sample() no longer takes the number of walkers,
# burn-in steps and iterations as positional arguments; the sampler is
# chosen and configured first

bs.set_sampler("emcee")

bs.sampler.setup(n_walkers=20, n_burn_in=100, n_iterations=1000)

bs.sample()
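The cell above uses a uniform prior for parameters that can take either sign or vary over a narrow range, and a log-uniform prior for the scale-like parameters F_2 and sigma_2. The difference is easiest to see by comparing how much prior probability each assigns to successive decades. The sketch below uses the bounds set on F_2 earlier and assumes the prior spans exactly those bounds; the helper functions are written for this illustration and are not part of 3ML.

```python
import math


def uniform_mass(lo, hi, lower, upper):
    """Probability of [lo, hi] under a uniform prior on [lower, upper]."""
    return (hi - lo) / (upper - lower)


def log_uniform_mass(lo, hi, lower, upper):
    """Probability of [lo, hi] under a prior with density proportional to 1/x."""
    return math.log(hi / lo) / math.log(upper / lower)


lower, upper = 1e-3, 200.0  # bounds set on F_2 earlier in this notebook

decades = [(1e-3, 1e-2), (1e-1, 1.0), (10.0, 100.0)]

uniform_masses = [uniform_mass(lo, hi, lower, upper) for lo, hi in decades]
log_uniform_masses = [log_uniform_mass(lo, hi, lower, upper) for lo, hi in decades]

for (lo, hi), u_mass, l_mass in zip(decades, uniform_masses, log_uniform_masses):
    print(f"[{lo:g}, {hi:g}]  uniform: {u_mass:.5f}  log-uniform: {l_mass:.5f}")
```

The log-uniform prior gives every decade the same weight, while the uniform prior concentrates almost all of its weight at the largest values.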

Again, we grab the results from the BayesianAnalysis object:

[ ]:

ar2 = bs.results

We can write and read the results to/from a file:

[ ]:

ar2.write_to("test_bayes.fits", overwrite=True)

[ ]:

ar2_reloaded = load_analysis_results("test_bayes.fits")

The AR holds the posterior samples from the analysis. We can see that the saved and live results are the same:

[ ]:

np.allclose(ar2_reloaded.samples, ar2.samples)

NOTE: MLE AR’s store samples as well. These are samples drawn from the covariance matrix.

We can examine the marginal distributions of the parameters:

[ ]:

#ar2.corner_plot();

# with chain consumer (pretty!)

ar2.corner_plot_cc();

We can return pandas DataFrames with equal tail or HPD results.

[ ]:

ar2.get_data_frame("equal tail")

[ ]:

ar2.get_data_frame("hpd")

Error propagation operates the same way. Internally, the process is the same as for the MLE results; however, the samples are those of the posterior rather than samples drawn from the (assumed) covariance matrix.

[ ]:

p1 = ar2.get_variates("fake.spectrum.main.composite.a_1")

p2 = ar2.get_variates("fake.spectrum.main.composite.b_1")

print(p1)

print(p2)

res = p1 + p2

print(res)

To demonstrate how the two objects (MLE and Bayes) are the same, we see that our plotting function written for the MLE result works on our Bayesian results seamlessly.

[ ]:

go(fitfun, ar2, model)