Example joint fit between GBM and Swift BAT¶

One of the key features of 3ML is the abil ity to fit multi-messenger data properly. A simple example of this is the joint fitting of two instruments whose data obey different likelihoods. Here, we have GBM data which obey a Poisson-Gaussian profile likelihoog ( PGSTAT in XSPEC lingo) and Swift BAT which data which are the result of a “fit” via a coded mask and hence obey a Gaussian ( \(\chi^2\) ) likelihood.

[5]:

%matplotlib inline

import matplotlib.pyplot as plt

from jupyterthemes import jtplot

jtplot.style(context="talk", fscale=1, ticks=True, grid=False)

plt.style.use("mike")

from threeML import *

from threeML.io.package_data import get_path_of_data_file

import os

import warnings

warnings.simplefilter("ignore")

Plugin setup¶

We have data from the same time interval from Swift BAT and a GBM NAI and BGO detector. We have preprocessed GBM data to so that it is OGIP compliant. (Remember that we can handle the raw data with the TimeSeriesBuilder). Thus, we will use the OGIPLike plugin to read in each dataset, make energy selections and examine the raw count spectra.

Swift BAT¶

[7]:

bat_pha = get_path_of_data_file("datasets/bat/gbm_bat_joint_BAT.pha")

bat_rsp = get_path_of_data_file("datasets/bat/gbm_bat_joint_BAT.rsp")

bat = OGIPLike("BAT", observation=bat_pha, response=bat_rsp)

bat.set_active_measurements("15-150")

bat.view_count_spectrum()

Auto-probed noise models:

- observation: gaussian

- background: None

Range 15-150 translates to channels 3-62

Now using 60 channels out of 80

Fermi GBM¶

[8]:

nai6 = OGIPLike(

"n6",

get_path_of_data_file("datasets/gbm/gbm_bat_joint_NAI_06.pha"),

get_path_of_data_file("datasets/gbm/gbm_bat_joint_NAI_06.bak"),

get_path_of_data_file("datasets/gbm/gbm_bat_joint_NAI_06.rsp"),

spectrum_number=1,

)

nai6.set_active_measurements("8-900")

nai6.view_count_spectrum()

bgo0 = OGIPLike(

"b0",

get_path_of_data_file("datasets/gbm/gbm_bat_joint_BGO_00.pha"),

get_path_of_data_file("datasets/gbm/gbm_bat_joint_BGO_00.bak"),

get_path_of_data_file("datasets/gbm/gbm_bat_joint_BGO_00.rsp"),

spectrum_number=1,

)

bgo0.set_active_measurements("250-30000")

bgo0.view_count_spectrum()

Auto-probed noise models:

- observation: poisson

- background: gaussian

Range 8-900 translates to channels 2-124

Now using 123 channels out of 128

Auto-probed noise models:

- observation: poisson

- background: gaussian

Range 250-30000 translates to channels 1-119

Now using 119 channels out of 128

Model setup¶

We setup up or spectrum and likelihood model and combine the data. 3ML will automatically assign the proper likelihood to each data set. At first, we will assume a perfect calibration between the different detectors and not a apply a so-called effective area correction.

[9]:

band = Band()

model_no_eac = Model(PointSource("joint_fit_no_eac", 0, 0, spectral_shape=band))

Spectral fitting¶

Now we simply fit the data by building the data list, creating the joint likelihood and running the fit.

No effective area correction¶

[10]:

data_list = DataList(bat, nai6, bgo0)

jl_no_eac = JointLikelihood(model_no_eac, data_list)

jl_no_eac.fit()

Best fit values:

| result | unit | |

|---|---|---|

| parameter | ||

| joint_fit_no_eac.spectrum.main.Band.K | (2.75 +/- 0.06) x 10^-2 | 1 / (cm2 keV s) |

| joint_fit_no_eac.spectrum.main.Band.alpha | -1.029 +/- 0.017 | |

| joint_fit_no_eac.spectrum.main.Band.xp | (5.7 +/- 0.4) x 10^2 | keV |

| joint_fit_no_eac.spectrum.main.Band.beta | -2.41 +/- 0.18 |

Correlation matrix:

| 1.00 | 0.90 | -0.85 | 0.14 |

| 0.90 | 1.00 | -0.73 | 0.08 |

| -0.85 | -0.73 | 1.00 | -0.32 |

| 0.14 | 0.08 | -0.32 | 1.00 |

Values of -log(likelihood) at the minimum:

| -log(likelihood) | |

|---|---|

| BAT | 53.044658 |

| b0 | 553.071650 |

| n6 | 761.174809 |

| total | 1367.291118 |

Values of statistical measures:

| statistical measures | |

|---|---|

| AIC | 2742.716915 |

| BIC | 2757.423943 |

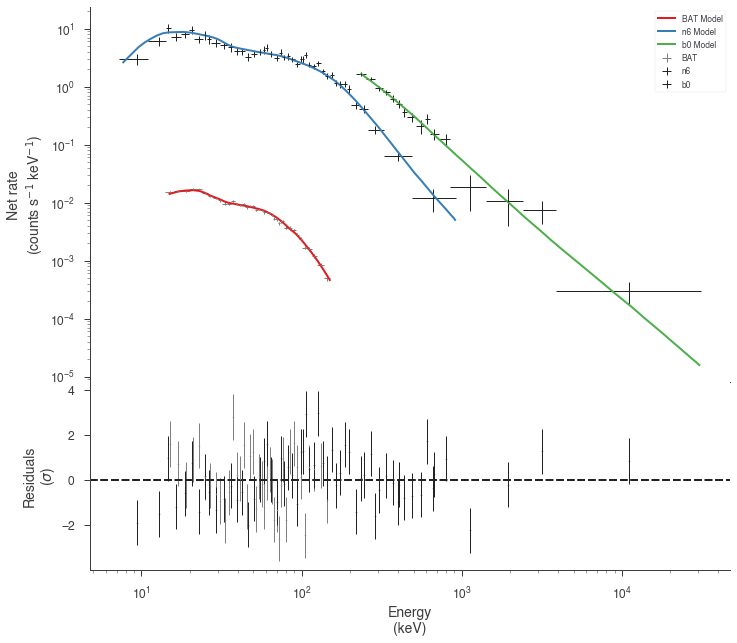

The fit has resulted in a very typical Band function fit. Let’s look in count space at how good of a fit we have obtained.

[11]:

threeML_config["ogip"]["model plot cmap"] = "Set1"

[12]:

display_spectrum_model_counts(

jl_no_eac, step=False, min_rate=[0.01, 10.0, 10.0], data_colors=["grey", "k", "k"]

)

It seems that the effective areas between GBM and BAT do not agree! We can look at the goodness of fit for the various data sets.

[14]:

gof_object = GoodnessOfFit(jl_no_eac)

with parallel_computation():

gof, res_frame, lh_frame = gof_object.by_mc(n_iterations=8000)

Starting ipyparallel cluster with this command line:

/Users/jburgess/.environs/3ml/bin/ipcluster start

Waiting for connection file: ~/.ipython/profile_default/security/ipcontroller-client.json

12 engines are active

Shutting down ipcluster...

[15]:

import pandas as pd

pd.Series(gof)

[15]:

total 0.000000

BAT 0.000125

n6 0.000000

b0 0.560125

dtype: float64

Both the GBM NaI detector and Swift BAT exhibit poor GOF.

With effective are correction¶

Now let’s add an effective area correction between the detectors to see if this fixes the problem. The effective area is a nuissance parameter that attempts to model systematic problems in a instruments calibration. It simply scales the counts of an instrument by a multiplicative factor. It cannot handle more complicated energy dependent

[16]:

# turn on the effective area correction and set it's bounds

nai6.use_effective_area_correction(0.2, 1.8)

bgo0.use_effective_area_correction(0.2, 1.8)

model_eac = Model(PointSource("joint_fit_eac", 0, 0, spectral_shape=band))

jl_eac = JointLikelihood(model_eac, data_list)

jl_eac.fit()

Best fit values:

| result | unit | |

|---|---|---|

| parameter | ||

| joint_fit_eac.spectrum.main.Band.K | (2.98 +/- 0.12) x 10^-2 | 1 / (cm2 keV s) |

| joint_fit_eac.spectrum.main.Band.alpha | (-9.84 +/- 0.26) x 10^-1 | |

| joint_fit_eac.spectrum.main.Band.xp | (3.31 +/- 0.32) x 10^2 | keV |

| joint_fit_eac.spectrum.main.Band.beta | -2.36 +/- 0.15 | |

| cons_n6 | 1.56 +/- 0.04 | |

| cons_b0 | 1.41 +/- 0.10 |

Correlation matrix:

| 1.00 | 0.95 | -0.92 | 0.31 | 0.29 | 0.62 |

| 0.95 | 1.00 | -0.82 | 0.26 | 0.26 | 0.52 |

| -0.92 | -0.82 | 1.00 | -0.36 | -0.45 | -0.77 |

| 0.31 | 0.26 | -0.36 | 1.00 | 0.04 | -0.03 |

| 0.29 | 0.26 | -0.45 | 0.04 | 1.00 | 0.47 |

| 0.62 | 0.52 | -0.77 | -0.03 | 0.47 | 1.00 |

Values of -log(likelihood) at the minimum:

| -log(likelihood) | |

|---|---|

| BAT | 40.078874 |

| b0 | 544.747650 |

| n6 | 644.705650 |

| total | 1229.532174 |

Values of statistical measures:

| statistical measures | |

|---|---|

| AIC | 2471.349094 |

| BIC | 2493.326910 |

Now we have a much better fit to all data sets

[17]:

display_spectrum_model_counts(

jl_eac, step=False, min_rate=[0.01, 10.0, 10.0], data_colors=["grey", "k", "k"]

)

[18]:

gof_object = GoodnessOfFit(jl_eac)

with parallel_computation():

gof, res_frame, lh_frame = gof_object.by_mc(

n_iterations=8000, continue_on_failure=True

)

Starting ipyparallel cluster with this command line:

/Users/jburgess/.environs/3ml/bin/ipcluster start

Waiting for connection file: ~/.ipython/profile_default/security/ipcontroller-client.json

12 engines are active

Shutting down ipcluster...

[19]:

import pandas as pd

pd.Series(gof)

[19]:

total 0.048750

BAT 0.028375

n6 0.017500

b0 0.841625

dtype: float64

Examining the differences¶

Let’s plot the fits in model space and see how different the resulting models are.

[20]:

plot_spectra(

jl_eac.results,

jl_no_eac.results,

fit_cmap="Set1",

contour_cmap="Set1",

flux_unit="erg2/(keV s cm2)",

equal_tailed=True,

)

We can easily see that the models are different